Why You Should Do a Dry Run Every Time You Test Remotely

The UX industry is all about making a better product—improving the apps and sites and games that users rely upon every day. Whether it’s tiny tweaks or a massive overhaul, the goal of any UX professional is to improve their company’s product.

But for most UX researchers, the product isn’t the only thing they aim to improve; they also strive to improve the tests they're conducting. Anyone who’s done some user testing knows how easy it is for a user to accidentally stumble off track if the tasks aren't written well. A misunderstood phrase or an overlooked question can sometimes derail a tester in completely unexpected ways—and if they are testing in a remote, unmoderated environment, there’s no way to get them back on track... other than by writing a solid test plan in the first place.

How to test your test

The UserTesting Research Team has learned that one of the key ingredients of a great test is performing a dry run of the test script. (This is also known as a “pilot test.”) We have one user go through the script, and then we watch the video as we normally would, keeping an eye out for any possible improvements to the script.

We check to make sure that:

- The user answered all of the questions that we needed answers to

- We didn’t accidentally use any confusing terms or jargon that would make a user stumble

- The user evaluated the right pages

If not, we adjust our test plans accordingly and try again. This way, we can ensure that our test plan is producing high-quality feedback from users and providing truly actionable insights for our clients.

What to check for in the dry run

Here are five basic questions to ask yourself when reviewing a dry run.

1. Do my tasks make sense to users?

Imagine your job is to set up a study across multiple devices (desktop, tablet, and smartphone). Your desktop dry run goes smoothly, so you order the rest of your tests. A week later, you're knee-deep in analysis, and you watch a very confused tester attempt to hover over the links at the top of her web page... on her tablet.

You realize (too late) that you forgot to update the language in your tasks based on the device being used. It’s an innocent mistake (one that most remote researchers have probably made at one time or another). But it can leave the user frustrated and confused... not because of the product she was testing, but because she wasn’t able to complete the task you assigned to her.

In a remote usability test, ultra-clear communication is especially important.

In an in-person usability session, this mistake could be caught and corrected on the spot. In a remote session, you can't step in, so clear communication is a must: a misphrased task can send a user into stress mode, diminishing their ability to complete the task and compromising the value of your research.

While you watch the dry run, really listen to how the user reads your tasks and instructions.

- Do they understand all the terminology used?

- Are they providing verbal feedback that directly answers your questions?

- Is there ever a time when you wanted them to demonstrate something, but they only discussed it?

Often, a simple edit to your tasks can keep users on track. If they misunderstand your terms, rephrase them! If they misunderstand your question, reword it! If they misunderstand your assignment, rewrite it!

Simple adjustments like these can make the rest of the videos more helpful to you (and, as a bonus, you’ve learned a little something about how to improve your task-writing skills).
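The device mix-up from the tablet story is one of the few task-writing problems you can screen for before anyone records a session. Here is a minimal Python sketch of that idea. The word list, the device names, and the function name are all assumptions made for illustration, not part of any UserTesting tool, and a check like this supplements the dry run rather than replacing it.

```python
import re

# Hypothetical word list: interactions that only make sense with a mouse.
POINTER_ONLY_TERMS = ("hover", "mouse over", "right-click", "double-click")

# Hypothetical device labels for studies that run on touch screens.
TOUCH_DEVICES = {"tablet", "smartphone"}


def find_device_mismatches(tasks, device):
    """Return (task_number, term) pairs for mouse-only wording on touch devices."""
    if device not in TOUCH_DEVICES:
        return []
    mismatches = []
    for number, task in enumerate(tasks, start=1):
        lowered = task.lower()
        for term in POINTER_ONLY_TERMS:
            # Match at a word boundary so "hovering" is caught as well.
            if re.search(r"\b" + re.escape(term), lowered):
                mismatches.append((number, term))
    return mismatches


tasks = [
    "Hover over the links at the top of the page. What do you expect to find?",
    "Add any item to your cart and describe the charges you see.",
]

print(find_device_mismatches(tasks, "tablet"))   # [(1, 'hover')]
print(find_device_mismatches(tasks, "desktop"))  # []
```

A flagged task is only a prompt to reread the wording. Whether the rewritten task actually makes sense to a tablet user is still something the dry run video has to tell you.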

2. Are my questions being adequately answered by the users?

Sometimes, it’s tempting to pose multiple questions to users inside the space of one task. It’s certainly efficient, and many times, it’s the right call.

For example, in my own usability studies, I’ll often start a user off by having them explore the app/site as they normally would, and I'll pose the following questions in a single task:

"What can you do here? Who is this site/app for? How can you tell?"

In this particular case, I’m using the questions to prompt users into discussing their observations and overall understanding of the site/app. I don’t need the answers to THOSE EXACT questions; I just need the users to talk out loud about what they see and the connections they are making to the content they are reviewing.

However, if I’m interested in whether the Shopping Cart charges are clear and whether the user perceives the site as trustworthy, I probably wouldn’t combine tasks or questions regarding those topics.

I need more detailed answers on both of those topics, and squeezing them into a single task is a guaranteed way to split the users’ focus, resulting in less detailed feedback in both areas.

Ask just one question at a time to get the most detailed feedback.

If you’re not sure if/when to combine certain questions, the dry run can help you decide.

- Do the user’s answers provide enough detail?

- Does the user provide quality sound bites that can be clipped and shared with others?

- Does the user adequately address your goals and objectives for the study?

If not, consider breaking up these questions into individual tasks before launching the full study.

3. Are my users able to complete any absolutely-required steps, like logging in to a specific account or interacting with the right pages?

There’s nothing worse than sitting down to watch some recordings, only to discover that all the users checked out the live site instead of the prototype, or couldn’t complete the login because of a glitch on your app! Replacing those tests can delay the completion of your research... and be a bit of an ego drain.

Quickly reviewing a dry run video can warn you of any areas where your script needs to provide a little bit more direction. Often, just an extra sentence or a well-placed URL can be the difference between successfully testing the product and completely missing the mark.

4. Are any and all links in my script functioning properly?

Since we’re already talking about links...

A dry run is a perfect opportunity to verify that all the URLs included in your test are useful to the user and functioning properly.

This is especially important if you’re collecting feedback page-by-page, comparing different versions of an app, or testing among competitors. In those situations, there are so many sites to juggle, and it’s important to make sure that users are visiting and referring to the right pages.
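If your script contains many URLs, a quick automated pass can catch dead links before the dry run even starts. The sketch below is one possible approach using only the Python standard library. The function names, the URL pattern, and the example.com addresses are assumptions made for illustration. The example at the bottom uses a stand-in fetcher so it runs without a network connection.

```python
import re
import urllib.error
import urllib.request

# Simple pattern for http(s) links in plain text; an assumption, not a full URL parser.
URL_PATTERN = re.compile(r"https?://[^\s)\"'>]+")


def extract_urls(script_text):
    """Return each distinct URL in the script, in order of first appearance."""
    seen = []
    for url in URL_PATTERN.findall(script_text):
        url = url.rstrip(".,;!?")  # drop sentence punctuation stuck to the link
        if url not in seen:
            seen.append(url)
    return seen


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if it can't be reached."""
    request = urllib.request.Request(url, headers={"User-Agent": "dry-run-link-check"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code
    except (urllib.error.URLError, TimeoutError, ValueError):
        return None


def check_links(script_text, fetch=fetch_status):
    """Map each URL in the script to True (loaded) or False (broken)."""
    return {url: fetch(url) == 200 for url in extract_urls(script_text)}


# Offline example: a stand-in fetcher pretends one page is missing.
script = (
    "Go to https://example.com/prototype. "
    "Then compare it with https://example.com/old-page, please."
)
fake_fetch = lambda url: 200 if url.endswith("prototype") else 404
print(check_links(script, fetch=fake_fetch))
```

Calling `check_links(script)` without the `fetch` argument makes real requests. A passing check only shows that a page loads. It can't tell you whether users land on the prototype rather than the live site, or whether the page is the one you meant to test, so the dry run video is still the final word.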

5. Are my screener questions really capturing the users I need?

Some tests call for a particular user group. For example, the physician’s portal of a medical care application needs to be tested by doctors, and a human resources website needs to be explored by HR representatives.

When the type of user is important to your study, the dry run is a great way to tell if your screener questions are capturing the right segment of the population. Start the test by having the user explain their position in the industry or in their career, and if the answer indicates that they aren’t actually your exact target user, then you can re-shape the screener questions so that they capture the exact type of user you need.

Once you have a successful dry run

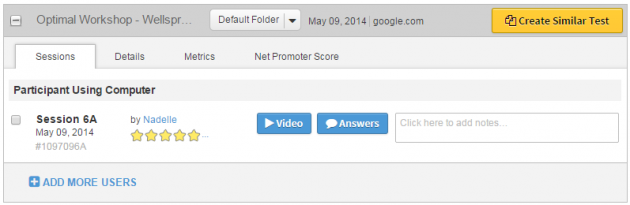

So let’s say you perform a dry run, and things go really well. The UserTesting dashboard makes it unbelievably easy to add more users to the test and get the ball rolling: just click ADD MORE USERS underneath the first session.

If your dry run went perfectly, you can just add more users to the study. If not, you can create a similar test, edit the script, and try again!

If you need to make significant tweaks to your test plan, however, simply click Create Similar Test, revise the draft as needed, and launch again. If you’re confident that your changes will work, you can release all the sessions in your study... but of course, another dry run probably wouldn’t hurt.

Remember to ask yourself the above questions as you review your dry runs, and you’ll be amazed by how much the feedback on your product improves! Better feedback will lead to better product improvements, better user experiences, and better test plans in the future.

Happy testing!