Designing with confidence at scale: How AI is changing the way UX teams generate and share insight

Bobby Meixner

Vice President of Solution Marketing, UserTesting

AI is rapidly reshaping how design teams work—but the real opportunity isn’t automation, it’s confidence. Drawing on original research with design and UX leaders, this session reveals how high-performing teams are using AI to move faster, reduce uncertainty, and make stronger design decisions earlier in the process.

- Understanding where AI delivers the most value across workflows—from feedback collection and synthesis to insight sharing

- Highlighting where human judgment remains essential

- Turning validation into a natural part of everyday design work rather than a handoff

- Scaling insight without scaling complexity—and ensuring AI investments translate into real organizational impact

Speaker 1

Hi, everyone.

I'd like to start today's presentation somewhere very specific. Let's go back to June twenty seventh, nineteen sixty seven, North London, outside Barclays Bank on High Street. There's a small crowd gathering there because something a little bit strange is about to happen. A guy by the name of Reg Varney, a sitcom actor wearing a cardigan and a golf hat, walks up to a wall with a little piece of paper in his hand. He takes his paper voucher, puts it in a drawer, enters his four digit PIN, and out pops ten pounds.

The first ATM.

The first ATM, right? There was no teller, there was no signature, there was no CAPTCHA, you didn't have to look at the little boxes and identify where the traffic lights were.

There was nobody behind the counter asking him whether he really is who he says he is. Right? It was just a voucher, a piece of paper that was mildly radioactive so that the machine could recognize it.

When you think about that moment, somebody had to design it, and before they did, somebody had to do the work to defend it. Right? If you think about it, somebody had to sit in a room full of bankers, who were probably fairly conservative, and say, you know what? I think people are gonna walk up to a wall, put a radioactive piece of paper into a drawer, and trust that they're actually going to get their money.

And they knew that. They had the evidence.

Two thousand seven, right?

Somebody had to convince a company, in this case it was perhaps Steve Jobs, right? And then an entire world that people would take the entire internet and carry it around in their pocket on a piece of glass that's really about the size of a playing card.

Twenty ten.

Somebody had to defend the idea that strangers would willingly get into each other's cars on purpose, all based on a star rating and a photo in which you can't quite make out who the person really is.

Somebody also had to argue that people would decide who they would fall in love with, or at least who they would meet, just by swiping their thumb. Right?

So what do all these moments really have in common? Right? The common thread here is that none of this actually started with technology. Right?

It started with people who understood people deeply enough to imagine something others didn't know they needed, and who had the ability to defend it until it truly existed. Right? That person was a designer, a researcher, a product owner, a builder and creator, as many people are saying right now. Somebody in a room, frankly, just like this one.

When you think about the job though, it's really the same every time. It's really to understand how are people thinking? How are they feeling? And what do they really need?

And it's the ability to do that and to defend that choice to a group of stakeholders, people who maybe haven't truly seen it yet. That is really the craft, the craft that everybody in this room practices, and it's older than any of the tools any of us have ever used. Right? It's what brought those first-time moments I just talked about into existence.

But today, we are all here because there's a lot of stuff that is changing. Everything is moving really, really quickly.

But when you think about it, the moments that I just walked you through, those took decades. Decades of networks, of teams, of research, of arguments, of rewrites, and that really just isn't necessarily the timeline that most organizations are operating on.

We have these tools, right? These tools and dozens like them mean that a single person can build a complete application that used to take hundreds of people. A designer without a PM, a PM without an engineer, you name it, any combination of those. For the first time in history, one person can build just about anything.

Which all sounds amazing until you take a step back and you realize, well what were those hundreds of people really doing? What did they used to all do together? What do they still do together? Right?

They pressure test each other. They challenge each other's assumptions. Right? They take a second, pause, and say, wait, is somebody actually gonna use this?

Is this the right problem to solve?

Are we actually doing the right things here?

But all of that is changing as well and it's all happening really really fast. Right? The question really isn't can we build faster? Can we create things faster? We know that we have the ability to do that.

The question is really the same one the ATM designer had to answer, the Uber designer had to answer, the Tinder designer had to answer. Right? Those are the questions everyone is facing right now.

Can I trust this? Can I defend this? Can I stand up in front of a group of people and actually feel confident that we are doing the right thing? So how do we all feel about that?

So we came out with a report a little over a month ago, Defensible Design in the Age of AI, to help start answering these questions. You can take a picture of the QR code and download the full report. We're gonna talk through some of the findings, and you can come check out our booth for additional detail. I saw some phones go up, so here's the QR code one more time. Didn't wanna cut you off there.

Alright.

Okay. So what did we do? We surveyed a hundred and eighty three designers across the United States and Europe (the UK, France, and Germany) to understand how AI is changing their work and, more importantly, how their confidence in their outputs is changing. So let's take a look at what we found.

So right off the bat, this is probably no surprise. Ninety one percent of designers report that they're working faster in AI-enabled environments. What's interesting, though, is that only fifteen percent say they feel much more confident about the output of their work.

So that is a six to one gap between how fast we're moving and how good we feel about what we're actually doing.

When you think back to the team that perhaps designed the ATM back in the sixties, what did they do? They were slow, right? Painfully slow. They had dramatically different tools and standards, but when they stood up in front of their board and their management, they knew they had done the work. This evidence suggests we may have flipped that a bit: we're moving fast, but we're not necessarily sure we're doing the right things.

If we break confidence down by stage, the story sharpens a bit. In the early stages, around discovery and ideation, designers are feeling pretty confident: four point one out of five. So AI is a great brainstorming partner, a great synthesizer, a great first draft, right? We're kind of solving the blank page problem.

But further into the cycle, when it comes down to making the final call, when we're actually going to spend money on this and put it in front of our customers, when we're asking how it will affect our reputation and whether people will like it, that's when things start to break down. Confidence drops. You could call this the reversibility factor. Early in the cycle, confidence is high because it's much easier to change and undo things. Further down the cycle, decisions become less reversible.

If you think about getting in a stranger's car, the Uber example, that's pretty irreversible. Right? Somebody had to go through and really truly be sure.

Continuing with the numbers, here's one of the more uncomfortable findings in the report. Sixty five percent say they can't confidently say their outcomes are better because of AI. Thirty seven percent say the results are, hey, maybe about the same.

And then nineteen percent say that actually, you know what, I think I'm getting some AI slop on my hands, so, they weren't loving that. So we're moving faster, right, than ever before, but by some of our own reporting, it's not necessarily changing for the better.

Okay. So if things aren't necessarily getting better, if speed isn't improving our outcomes, who is accountable when it doesn't? If we look at this data, it's very clear: people aren't really sure. We don't necessarily know where the accountability lies.

And all of this starts to feel a little bit risky. Right? So do designers think AI increases or decreases risk? Interestingly, they're fairly evenly split. Thirty eight percent say it increases risk, and another thirty eight percent say it actually decreases risk.

What's more interesting is how they split. When you look at the group that says risk increased, how are they validating and defending their decisions? What information are they using to do that? Most of them rely on peer discussion and critique, from people who may not want to tell you that your idea sucks, or who might tell you everything's great.

They validate less frequently, they do less usability testing, and they're more likely to skip it altogether than the group that feels AI decreases risk. What is that group doing? They're doing the work. They're cross-checking. They're doing the research. They're running usability tests. They're looking at the data before they roll things out.

Alright. So where does this lead? Right? How do we how do we keep this on the right track?

I'm not gonna go through each of these. These are in the report. You can download them. You can take a look.

Just calling out a few things.

Number one, prioritize defensibility over speed. Just because it's fast doesn't mean it's good.

Number four, clarify decision ownership. If we go down this route, who's ultimately taking responsibility? Is it a specific person? Is it a team?

Let's be a little more specific about who's actually accountable. And number six is protecting human judgment: protecting your craft, and protecting the ability to conduct proper research and gather insights the right way.

It's protecting the designer's craft of making judgment calls about what actually looks good. Right? In a way, you can't teach taste.

Okay. So what does this look like in a world where people can build almost anything? Everyone is using generative AI tools, regardless of their role.

Let's pick up with a fictional company, ThreadLine. Think of them like a Restoration Hardware. We're gonna follow two individuals. One is an insight seeker, an insights professional: Jordan, a UX researcher.

The other one, Maya, you could consider a builder or a creator. Maybe she's a designer, maybe she's a PM; it could be a variety of things. Let's look at how they use generative tools to accelerate their work while also protecting their craft.

So we start off with Jordan, who is solving the big problems. She provides strategic insights to her organization, and she's using a generative AI tool to do that. Think of it as maybe a custom GPT, an internal insights layer across their entire stack. She wants to get ahead of emerging trends, so she asks: what's changed in our luxury home shoppers' behavior over the last two quarters?

The LLM is connected to lots of different data sources within the enterprise. It's pulling information from UserTesting to understand how people feel about things and why. It's pulling in analytics from Amplitude and Shopify, plus discussions the team might be having across different collaboration tools. When she looks at the data, she can see that mid-funnel abandonment has climbed from thirty one percent to fifty eight percent.

That's way up, based on analytics coming from Shopify. Why? Right? Well, twenty four people in video interviews are saying, verbatim, that they're questioning whether something is going to fit in their room. So scale anxiety is going up, and something needs to change. She works with her insights assistant to determine what's going on, and the assistant lets the researcher make the call: is this a design problem or a content problem?

Jordan thinks it's a design problem, so she starts working with Maya. Using her generative tool, she drafts a brief so that they can build something and test it out. The question they want to answer: can a visual-first room assistant reduce mid-funnel abandonment by eliminating some of the scale anxiety that folks have? So let's test something where people can upload a photo of their room and get a visual of it.

Okay. Great.

Let's pick up now on the builder and creator side. We're working as Maya, in a tool that's just an anonymized mock-up, right? Say it's a Claude design, maybe it's a Figma Make; name your favorite generative design tool. It has automatically pulled in the information Jordan prepared for her, and Maya's like, hey, let's whip this up. Let's see if we can get a visual shopping assistant going to test this out.

But you know what? We don't want to burn through a ton of tokens. We want the first go at this to be based on real context: all the information available to us within our enterprise. So we can look at clips of what people have been saying and doing on the site, and why. We're leveraging data from a variety of sources, like analytics data, to determine what's going on here.

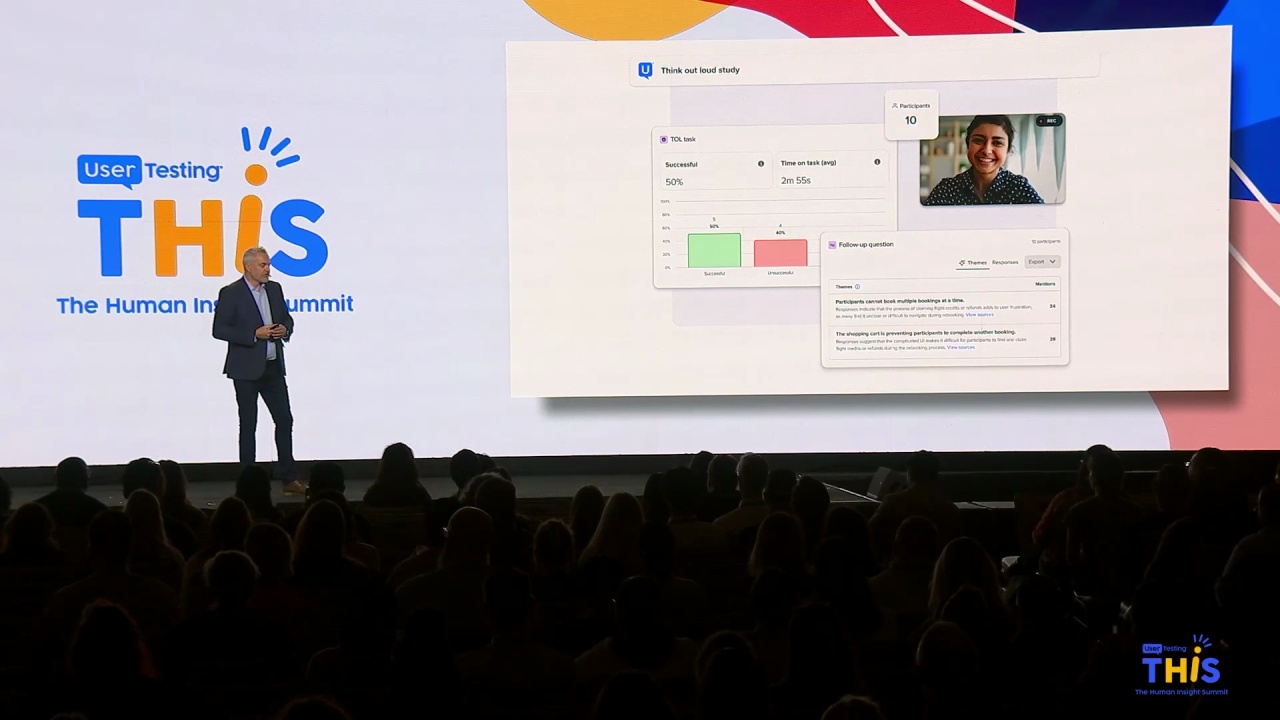

So the generative tool develops a few iterations: we've got one, two, three, and we're whipping through them. But before we roll this out, we need to get some eyes on it. What do people actually think? So we're gonna run a test: let's get twenty people within our target audience and find out exactly what they think of this.

This design tool is connected to an application like UserTesting, which drafts a test. The designer can decide what to ask and change the tasks, but the designer's not a researcher.

Right? We wanna make sure we're doing this right, so the test gets passed back to Jordan. Jordan can make sure they're finding the right people, that the incentives are right, and that the insights they're going to build from are high quality. She can come in here, look at the audience, and approve or reject the people who are going to give feedback.

Great. She approves it, the feedback comes in, and it all looks good. They can go in and review it, and the recommendations come back inside the generative application Maya is using, with suggestions for how the design should be tweaked. Maya can choose which recommendations she wants automatically incorporated into her design. Those changes get applied, and she might make some manual tweaks as well, adjusting things based on her own instinct. But again, somebody has to defend all of this, right? So the tool compiles a report they can share with leadership: the problem they were solving, how they're trying to solve it, what they're doing, and what they're going to watch for once this launches to make sure they stay on the right path.

So this type of application and experience is conceptual, but certainly possible, and many organizations are doing this today and making a significant impact on their business. They're driving growth, they're innovating faster, they're reducing risk; good things are happening.

Alright. So wrapping up. We started in nineteen sixty seven, right at that wall in London, the first ATM.

And somewhere right now, perhaps, maybe some of you that are in this room are working on that next big idea. The next ATM, the next iPhone, the next Uber, right? The next thing that in ten years we're all going to be looking at each other and having a hard time imagining life without.

And we'll all be using tools that frankly did not exist two years ago.

The speed is unreal, right? The pressure is absolutely real and valid. But when you think about the actual job everybody in this room is doing, it really hasn't changed since that man walked up and took cash out of the first ATM. That job is to understand people deeply enough to imagine something before they realize they need it, and to be able to defend that choice.

That's not something that AI can do for any of us. Right? That's all part of what we do.

So there's one more thing I'll leave you with before we head to lunch; I know we're running a little over time. Perhaps some of you noticed that these photos were generated using AI. All of these first-time moments were generated using AI.

Some of them look real. Some of them actually look very real. But you know what? There are some little things that are actually just kind of off.

Right? And who notices? It's all of us. It's humans. It's human insight. And that is what continues to be really valuable and that is the whole point.

So come visit us at booth three and have a conversation with our team; some of them are sitting here. See how many imperfections you can point out in our photos. Angie's got a fun little giveaway for the person who finds the most. And when you do that, you're going to prove to yourself that what you bring to the table, your human judgment, is still the scarce thing.

Thank you.

Speaker 2

Bobby, thank you. That was awesome. Thanks. This time, I'll start with a question for you. On one of the slides, you mentioned prioritizing defensible decisions over velocity.

And it said something about what good looks like. I'm wondering if you have any perspective on whether the definition of good has changed in the last year or so. Have we gone from rigor to good enough, and is that the new definition of good?

Speaker 1

I think the definition of good has changed in certain circumstances, right? I think it depends on what the stakes are.

What is good and what is good enough? And who decides that?

That's that's up to all of us. Right? So, that's up to human judgment, that's up to human insight, that's up to experience and decisions in the context that we all have as individuals. Because we've all had those moments where we're using AI, we get an answer back and we might think it's really good initially and then we go back maybe a few hours later and we look at it again, it's like what was this? What was I thinking? It sounded so good originally or you know, like things shift and change. So it really I think it depends on what you're doing, it depends on what the stakes are.

Speaker 2

Cool. Yeah. Thank you. Any questions from the audience? Let's go there, in the middle.

Speaker 1

I love that thing, by the way. That is the first time I have seen a plushy ball microphone. Whoever invented that, that was a good idea. What was the human insight that went into that?

Speaker 3

My name is Sejun, from UX Design at SCAD.

I kinda have, like, two questions. First, what would be the difference between defending your idea and convincing people of your idea? And second, regarding the data on people not satisfied with AI's outcomes, do you think that would change once they're able to tweak their prompts so the AI generates better outcomes?

Speaker 1

Let me take your first question. I can only take one question at a time.

What's the difference between defending and convincing, you said? I think it depends on your audience, right? It's understanding what's important to your audience, putting yourself in your stakeholders' shoes.

So I can get up and defend anything and say any number of things, but if I'm talking to an audience that doesn't care about what I'm saying and what I'm using as my proof and evidence and what I think I'm going to impact, it all falls flat. It's really understanding not only who you're building for from a customer perspective, but who are your stakeholders and what do they care about, what are the numbers that they're gonna wanna move, what's gonna impact them, what's gonna get them hired, fired, promoted, etcetera so that you can present that data back to them and then become really valuable. And your second question?

Speaker 3

Do you think the data on people who are not satisfied with the outcome AI gives them will change when they're able to write better prompts?

Speaker 1

I think it does. Yeah. Yeah. It does. It's all relative. Sometimes people show me things and I'm like, what was the prompt that you used for this?

What happened?

Another question.

Speaker 2

Yes. Over here. Oh, there you go.

Speaker 4

Hi. My name is Grace Richardson. I'm also a student here at SCAD. We've been asking what the role of a designer is now with AI, but I want to pose a slightly different question which is what is the new role of a user with AI if we are testing through AI with AI questions, if we're testing AI personas?

Speaker 1

What is the role of a user with AI? I mean at the end of the day the user is trying to accomplish something and that doesn't necessarily change.

At the end of the day, right, we're all building for humans, and I think that's really important to understand. We're optimizing for lots of things, but if you take the ThreadLine example, somebody's got to sit somewhere; somebody's decorating their room, right? Somebody has some outcome they're trying to achieve, so it really comes down to truly understanding what folks are trying to achieve, what the best way forward is, and how to think that through in the most innovative and effective way.

Speaker 2

Alright, yeah over there.

Speaker 5

Hi there, my name is Harper. I'm also a UX student at SCAD. The data you presented on designer and researcher confidence in AI output was really interesting. I was curious about your thoughts on user confidence in design output, meaning what users are actually interacting with. Do you think that's going to change as AI is implemented more in design processes?

Speaker 1

I absolutely think it changes. Yeah. We didn't necessarily run data on that, but I think we all know when we've seen something that's AI.

And when I see that, I kinda start to check out. I'm like, you know what, I don't know. It's the stuff you see on LinkedIn with all the em dashes and the "it's not this, it's that" construction. People recognize those patterns and start dismissing those things.